Michael Yang – Applied Science, Year 2

Abstract

Visually impaired people face difficulties navigating physical environments every day. This project explores a low-cost solution that runs a lightweight computer vision model on inexpensive hardware, such as a Raspberry Pi, to provide real-time object detection. A computer vision model was fine-tuned to recognize a single object (a chair) and deployed on a Raspberry Pi 4. On a test set of 100 images, the fine-tuned model achieved an accuracy of 96% and an average detection time of 0.6229 ± 0.02 seconds per detection. These results demonstrate the feasibility of deploying lightweight computer vision models for assistive technology.

Introduction

People who are visually impaired face difficulties every day navigating physical environments such as crowded streets and buildings. With 39 million people who are blind and 246 million people with impaired vision worldwide, the need for assistive technology is significant (Walle et al., 2022). Assistive devices aim to help visually impaired people interact with and navigate their surroundings by providing information through audio or tactile feedback. While traditional tools such as white canes and guide dogs remain very important, modern assistive technology can further improve the lives of visually impaired people by using artificial intelligence and computer vision to detect and recognize objects in their surroundings.

Many studies have explored the use of technology to assist people who are visually impaired. Several have proposed sensor-based devices that detect obstacles in the user’s surroundings. Elmannai and Elleithy (2017) reviewed devices that use ultrasonic and infrared sensors to detect obstacles and provide real-time feedback to the user. These sensor-based devices were effective at detecting obstacles, but they could not provide detailed information about the environment, such as which specific objects were present.

Beyond what sensor-based devices can offer, artificial intelligence (AI) enables capabilities that a purely sensor-based device lacks, such as recognizing complex objects. A convolutional neural network (CNN) is a type of artificial neural network designed to automatically detect and learn patterns in images (O’Shea & Nash, 2015). Joshi et al. (2020) showed that a CNN trained on images of relevant objects can assist a visually impaired person; their method achieved an average accuracy of 95.19% for object detection and 99.69% for object recognition, showing the potential of artificial intelligence and computer vision to address the problems that visually impaired people face. However, this method requires powerful hardware, which increases cost and limits accessibility.

Wearable devices in particular are promising because they can give a visually impaired person real-time information about their surroundings without requiring any interaction from the user. A wearable smart system can integrate object recognition and obstacle avoidance and provide a visually impaired person with real-time audio feedback (Ramadhan, 2018). Although this system works reliably, it uses multiple sensors as well as cellular communication and GPS modules, which can increase the weight and cost of the device.

A similar system uses AI vision to provide real-time audio descriptions of a visually impaired person’s surroundings (Brilli et al., 2024). It integrates many features to assist visually impaired people, such as object detection, text recognition, and scene description. Because it relies on connecting to an external server, it works reliably in places with good connectivity, but its reliability decreases when latency is high or connectivity is poor.

Despite these advancements, there remains a gap for an affordable wearable device that runs computer vision on the device itself without relying on external servers. The devices described by Elmannai and Elleithy (2017) use only sensors, which work well for obstacle detection but cannot give the user more context. The solutions by Joshi et al. (2020) and Ramadhan (2018) use computer vision for object detection, but they either require powerful hardware, which increases cost, or include many components, which can increase the weight and cost of a wearable device. Brilli et al. (2024) proposed a cost-efficient wearable device with computer vision, but it relies on external servers. This highlights the need for a low-cost wearable device capable of real-time object recognition without relying on external servers.

This project aims to create a software prototype that detects objects in real time on low-cost hardware. Given the project’s scope, the prototype is trained to recognize only a single object that is easy to test, in order to demonstrate the potential of running computer vision models on low-cost hardware. By using low-cost hardware, such as a Raspberry Pi, and open-source libraries, such as ONNX Runtime (ONNX Runtime developers, 2018), this project aims to create software that recognizes objects in real time on affordable, portable hardware, helping people who are visually impaired navigate physical environments more easily.

Materials and Methods

Because this project is a prototype, it was designed to recognize a single object to simplify testing. However, the same approach could be extended to more objects in the future. The object chosen for this prototype is a chair.

Preparing data

A dataset of chair images was downloaded from Kaggle. These images were then labelled automatically using a Python script that runs a high-accuracy computer vision model, which locates each chair in the image. To speed up this labelling process, the script was run on Google Colab with a T4 GPU. The GPU was used only for this data preparation and training process; it is not part of the final prototype.
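The labelling step can be illustrated with a small helper that turns detections into training labels. This is a minimal sketch, not the author’s script. It assumes that the high-accuracy labelling model is pre-trained on the COCO dataset (where "chair" has class index 56), that its detections are available as `(class_id, confidence, x1, y1, x2, y2)` tuples in pixels, and that labels are written in the YOLO text format (one line per box: class, then box centre x, centre y, width and height, each normalised to the image size). The function name and the 0.5 confidence cut-off are hypothetical.

```python
from typing import Iterable, List, Tuple

# Assumption: the labelling model is COCO-pretrained, where "chair" has index 56.
COCO_CHAIR_ID = 56
# In the single-class training set, "chair" becomes class 0.
DATASET_CHAIR_ID = 0

# (class_id, confidence, x1, y1, x2, y2), box corners in pixels
Detection = Tuple[int, float, float, float, float, float]


def to_yolo_label_lines(
    detections: Iterable[Detection],
    img_w: int,
    img_h: int,
    conf_threshold: float = 0.5,  # hypothetical cut-off for trusting an automatic label
) -> List[str]:
    """Convert pixel-space chair detections into YOLO-format label lines."""
    lines = []
    for cls, conf, x1, y1, x2, y2 in detections:
        # Keep only confident chair detections; every other class is discarded.
        if cls != COCO_CHAIR_ID or conf < conf_threshold:
            continue
        # YOLO labels store the box centre and size as fractions of the image size.
        xc = (x1 + x2) / 2 / img_w
        yc = (y1 + y2) / 2 / img_h
        w = (x2 - x1) / img_w
        h = (y2 - y1) / img_h
        lines.append(f"{DATASET_CHAIR_ID} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}")
    return lines


# Example: a 400x300 image with one confident chair, one person and one weak chair.
example = [
    (56, 0.90, 100, 50, 300, 250),
    (0, 0.95, 10, 10, 50, 90),
    (56, 0.30, 0, 0, 40, 40),
]
print(to_yolo_label_lines(example, img_w=400, img_h=300))
```

In the layout the Ultralytics trainer reads, each image’s label lines would be saved to a `.txt` file with the same base name in a `labels` folder alongside the `images` folder.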

Fine-tuning the model

The labels produced by the high-accuracy model were used to fine-tune a faster model with lower baseline accuracy. Fine-tuning a fast model on images of the desired object can increase its accuracy on that object while preserving its speed. For this project, YOLO11n was chosen as the pre-trained model because it is the smallest and fastest variant in the YOLO11 family. Although it sacrifices some general accuracy, this trade-off is acceptable for the prototype, because fine-tuning it on labelled images of the target object (a chair) is expected to give high accuracy for that object. The pre-trained YOLO11n model was fine-tuned on the automatically labelled data using a Python script and the Ultralytics YOLO library (Jocher et al., 2023). This improves the model’s accuracy when detecting chairs while keeping it fast.
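The fine-tuning step might look like the following with the Ultralytics Python package, which provides the `YOLO` class with `train` and `export` methods (the latter can write an ONNX file). This is an illustrative sketch rather than the project’s script: the directory layout, the dataset file name `chairs.yaml`, the epoch count and the image size are all assumptions. The dataset description is built as plain text so that it can be checked without Ultralytics installed; the library is imported only when `fine_tune` is called.

```python
from pathlib import Path


def make_dataset_yaml(root: str) -> str:
    """Describe a single-class chair dataset in the YAML layout Ultralytics reads.

    Assumed (hypothetical) layout: <root>/images/{train,val} and <root>/labels/{train,val}.
    """
    return "\n".join([
        f"path: {root}",
        "train: images/train",
        "val: images/val",
        "names:",
        "  0: chair",  # the only class in the fine-tuning data
        "",
    ])


def fine_tune(root: str, epochs: int = 50, imgsz: int = 640) -> str:
    """Fine-tune pre-trained YOLO11n on the chair data, then export it to ONNX."""
    from ultralytics import YOLO  # imported lazily so make_dataset_yaml works without it

    yaml_path = Path(root) / "chairs.yaml"  # hypothetical file name
    yaml_path.write_text(make_dataset_yaml(root))

    model = YOLO("yolo11n.pt")  # pre-trained nano weights
    model.train(data=str(yaml_path), epochs=epochs, imgsz=imgsz)
    # After training, the model holds the best weights; export them for ONNX Runtime.
    return model.export(format="onnx", imgsz=imgsz)


print(make_dataset_yaml("datasets/chairs"))
```

Keeping the export inside the same function reflects the order of the methods above: the ONNX file produced at the end is what would be copied to the Raspberry Pi.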

Testing the trained model

The fine-tuned model was exported to the ONNX format and run with the ONNX Runtime library on a Raspberry Pi 4 Model B with 2 GB of RAM. It was tested on a dataset of 100 images kept separate from the training data. These images were chosen manually: 50 contain chairs and 50 do not. This tests whether the model detects a chair in images that contain one and reports no chair in images that do not. The model was run on the entire test set, and the average detection time and the accuracy were recorded.
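Running the exported model with ONNX Runtime and turning its output into a chair/no-chair decision could be sketched as follows. The assumptions here are not stated in the project: the exported YOLO11 model is assumed to take one 640×640 RGB image as a float32 tensor scaled to [0, 1] in NCHW order, and to return one array of shape (1, 4 + number of classes, number of candidate boxes), whose first four rows are box coordinates and whose remaining rows are per-class scores. A chair is reported if any chair score reaches a threshold. Resizing the image and non-maximum suppression are omitted because a yes/no decision does not need individual boxes. The function names and the 0.25 threshold are hypothetical.

```python
import numpy as np


def to_input(image_rgb: np.ndarray) -> np.ndarray:
    """HWC uint8 RGB image (assumed already 640x640) -> 1x3xHxW float32 in [0, 1]."""
    x = image_rgb.astype(np.float32) / 255.0
    return np.ascontiguousarray(x.transpose(2, 0, 1)[np.newaxis])


def chair_present(output: np.ndarray, chair_index: int = 0, conf_threshold: float = 0.25) -> bool:
    """Decide whether a raw YOLO output array contains a chair.

    chair_index is 0 for a single-class fine-tuned model and 56 for a
    COCO-pretrained model (assumed class order).
    """
    preds = output[0]                      # (4 + num_classes, num_boxes)
    chair_scores = preds[4 + chair_index]  # skip the 4 box-coordinate rows
    return bool(chair_scores.max() >= conf_threshold)


def make_session(model_path: str):
    """Create the ONNX Runtime session once, on the CPU, and reuse it for every image."""
    import onnxruntime as ort  # imported lazily so the helpers above run without it

    return ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])


def detect_chair(session, image_rgb: np.ndarray, chair_index: int = 0) -> bool:
    """Run one detection and reduce it to a yes/no answer."""
    input_name = session.get_inputs()[0].name
    output = session.run(None, {input_name: to_input(image_rgb)})[0]
    return chair_present(output, chair_index=chair_index)
```

Creating the session once, rather than per image, keeps model loading out of the per-detection time.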

Determining speed

As shown in Figure 1, the detection time for each image was calculated by recording the time immediately before and after the detection and taking the difference in seconds. The average detection time was then calculated over all images. To determine whether the model is fast enough, the reciprocal of the average detection time was taken, giving the number of detections per second the model can perform. A performance of at least 1 detection per second is considered fast enough for real-time use. The standard deviation of the detection times was also recorded and is reported as the uncertainty.

Figure 1. Code segment used to calculate the time taken for each image
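The timing procedure described above can be sketched in a few lines. This is not the code shown in Figure 1; it assumes a `detect` function that performs one detection (for example, a wrapper around an ONNX Runtime session) and uses `time.perf_counter`, a high-resolution clock suited to short intervals. The uncertainty of the detection rate is obtained by propagating the standard deviation through the reciprocal, and the sample standard deviation is assumed. The function names are hypothetical.

```python
import statistics
import time
from typing import Callable, Iterable, List, Tuple


def time_detections(detect: Callable, images: Iterable) -> List[float]:
    """Return the wall-clock time, in seconds, of one detection per image."""
    times = []
    for image in images:
        start = time.perf_counter()
        detect(image)
        times.append(time.perf_counter() - start)
    return times


def summarize(times: List[float]) -> Tuple[float, float, float, float]:
    """Mean time, its standard deviation, detections per second, and that rate's uncertainty."""
    mean = statistics.mean(times)
    sd = statistics.stdev(times)  # sample standard deviation (assumption)
    rate = 1.0 / mean             # detections per second
    rate_sd = sd / mean ** 2      # first-order propagation: |d(1/t)/dt| * sd
    return mean, sd, rate, rate_sd


mean, sd, rate, rate_sd = summarize([0.4, 0.6])
print(f"{mean:.3f} ± {sd:.3f} s -> {rate:.3f} ± {rate_sd:.3f} detections/s, real time: {rate >= 1.0}")
```

The last comparison applies the threshold of at least 1 detection per second used in this project.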

Determining accuracy

The model is considered accurate if it correctly classifies at least 90% of the images in a test set containing both images of the desired object (chair) and irrelevant images (no chair). Accuracy was calculated by dividing the number of correct predictions, for both chair and non-chair images, by the total number of images in the test set.
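The accuracy calculation, together with the confusion-matrix counts reported in the Results, can be expressed as a short function. This is a sketch: it assumes each test image has a true label and a predicted label, both stored as booleans (True meaning "contains a chair"), and the function names are hypothetical. The example reproduces the counts given in the Discussion for the fine-tuned model (46 of 50 chair images and all 50 non-chair images classified correctly).

```python
from typing import Dict, Sequence


def confusion_counts(labels: Sequence[bool], predictions: Sequence[bool]) -> Dict[str, int]:
    """Count true/false positives and negatives, treating "chair" as the positive class."""
    counts = {"tp": 0, "fn": 0, "fp": 0, "tn": 0}
    for truth, pred in zip(labels, predictions):
        if truth:
            counts["tp" if pred else "fn"] += 1
        else:
            counts["fp" if pred else "tn"] += 1
    return counts


def accuracy(counts: Dict[str, int]) -> float:
    """Correct predictions (both classes) divided by the total number of images."""
    return (counts["tp"] + counts["tn"]) / sum(counts.values())


def chair_recall(counts: Dict[str, int]) -> float:
    """Fraction of chair images in which a chair was detected."""
    return counts["tp"] / (counts["tp"] + counts["fn"])


labels = [True] * 50 + [False] * 50
fine_tuned = [True] * 46 + [False] * 4 + [False] * 50
counts = confusion_counts(labels, fine_tuned)
print(counts, accuracy(counts), chair_recall(counts))
```

Reporting the recall on chair images alongside overall accuracy shows where the errors occur, which is what the confusion matrices make visible.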

Results

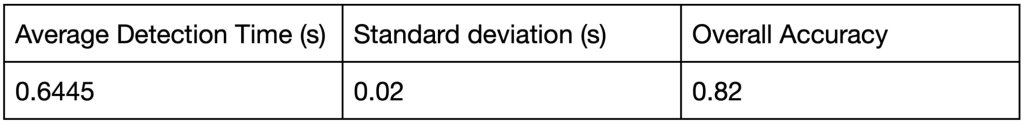

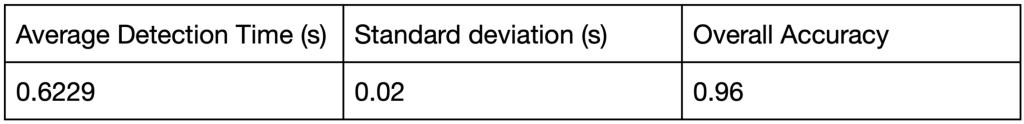

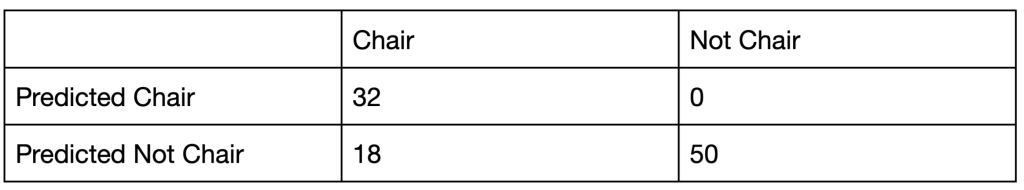

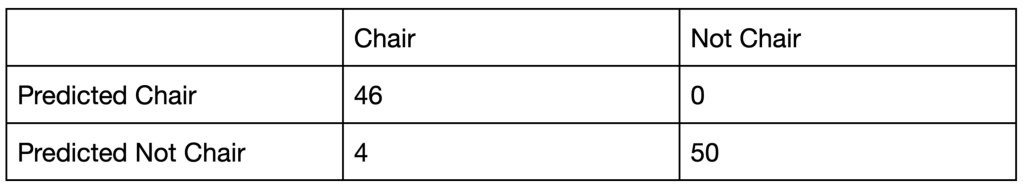

The pre-trained model without fine-tuning and the same model after fine-tuning were each evaluated on 100 images, 50 containing chairs and 50 without chairs. The tables below show the average detection time, standard deviation, and overall accuracy of the model before fine-tuning (Table 1) and after fine-tuning (Table 2), followed by confusion matrices that give a more detailed view of model performance (Table 3; Table 4). The model before fine-tuning achieved an average detection time of 0.6445 ± 0.02 seconds.

Table 1. Average detection time, standard deviation, and accuracy of the model before fine-tuning

Table 2. Average detection time, standard deviation, and accuracy of the model after fine-tuning

The model after fine-tuning achieved an average detection time of 0.6229 ± 0.02 seconds.

Table 3. Confusion matrix for chair classification before fine-tuning

Table 4. Confusion matrix for chair classification after fine-tuning

Discussion

The model without fine-tuning met the speed threshold, but it was not accurate enough to pass the previously defined accuracy threshold. From Table 1, the average time for one detection was 0.6445 ± 0.02 seconds (1.551 ± 0.05 detections per second). This result satisfies the threshold of at least 1 detection per second. However, the accuracy of the model before fine-tuning is only 0.82, which is less than the minimum accuracy of 0.9. Therefore, while the model before fine-tuning is fast enough, it is not accurate enough.

As shown in Table 2, the fine-tuned model achieved an average detection time of 0.6229 ± 0.02 seconds (1.605 ± 0.05 detections per second) and an accuracy of 0.96. Fine-tuning had little effect on speed: both average detection times are around 0.6 seconds, and their difference (about 0.02 seconds) is comparable to the standard deviation of each measurement. Accuracy, however, increased substantially, from 0.82 to 0.96. The fine-tuned model is therefore more accurate at detecting chairs while being similarly fast.
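The conversion between detection time and detection rate used in this section can be written out explicitly. The worked example below assumes the standard first-order rule for propagating an uncertainty through a reciprocal, with $\bar{t}$ the average detection time, $\delta t$ its standard deviation (0.02 s), and $r$ the number of detections per second; values are rounded to two or three decimal places:

```latex
r = \frac{1}{\bar{t}}, \qquad
\delta r \approx \left|\frac{dr}{d\bar{t}}\right|\,\delta t = \frac{\delta t}{\bar{t}^{2}}

\text{Before fine-tuning:}\quad r = \frac{1}{0.6445\ \mathrm{s}} \approx 1.55\ \mathrm{s^{-1}}, \qquad
\delta r = \frac{0.02\ \mathrm{s}}{(0.6445\ \mathrm{s})^{2}} \approx 0.048\ \mathrm{s^{-1}}

\text{After fine-tuning:}\quad r = \frac{1}{0.6229\ \mathrm{s}} \approx 1.61\ \mathrm{s^{-1}}, \qquad
\delta r = \frac{0.02\ \mathrm{s}}{(0.6229\ \mathrm{s})^{2}} \approx 0.052\ \mathrm{s^{-1}}
```

Both uncertainties round to the ±0.05 detections per second reported above, and both rates exceed the threshold of 1 detection per second.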

The confusion matrices in Table 3 and Table 4 show that, on the 100-image test set, every error made by both models was a false negative (the model failing to detect a chair that is present). Both models classified every non-chair image correctly, but on images containing chairs the fine-tuned model was substantially more accurate than the original model. The model without fine-tuning correctly identified chairs in 32 of the 50 chair images (64%), while the fine-tuned model correctly identified chairs in 46 of the 50 (92%). Fine-tuning therefore raised the proportion of chair images correctly identified from 64% to 92%, which allowed the model to pass the minimum accuracy threshold of 0.9.

One limitation of this project is that it focuses on detecting only a single object. While the model is fast and detects chairs reliably, real-world environments contain many more objects that visually impaired people need to recognize daily. In the future, this project could be expanded to include more objects, and further research is needed to ensure that detecting more objects does not sacrifice performance.

Conclusion

This project demonstrates the potential of combining low-cost hardware with computer vision models to assist visually impaired individuals through real-time object recognition. By fine-tuning a lightweight model, YOLO11n, to recognize a single object and deploying it on a Raspberry Pi 4 Model B with 2 GB of RAM, the system achieved an average detection rate of 1.605 ± 0.05 detections per second and an accuracy of 96%. These results meet the minimum thresholds set in the Methods: at least 1 detection per second and at least 90% accuracy.

Although the prototype currently only recognizes chairs, additional objects could be incorporated in the future through similar training processes. Future work could involve expanding the object set and optimizing for multi-object detection.

Appendix

References

Brilli, D. D., Georgaras, E., Tsilivaki, S., Melanitis, N., & Nikita, K. (2024). AIris: An AI-powered Wearable Assistive Device for the Visually Impaired. arXiv. https://arxiv.org/abs/2405.07606

Elmannai, W., & Elleithy, K. (2017). Sensor-Based Assistive Devices for Visually-Impaired People: Current Status, Challenges, and Future Directions. Sensors, 17(3), 565. https://doi.org/10.3390/s17030565

Jocher, G., Qiu, J., & Chaurasia, A. (2023). Ultralytics YOLO (Version 8.0.0) [Computer software]. https://github.com/ultralytics/ultralytics

Joshi, R. C., Yadav, S., Dutta, M. K., & Travieso-Gonzalez, C. M. (2020). Efficient Multi-Object Detection and Smart Navigation Using Artificial Intelligence for Visually Impaired People. Entropy, 22(9), 941. https://doi.org/10.3390/e22090941

O’Shea, K., & Nash, R. R. (2015). An Introduction to Convolutional Neural Networks. arXiv. https://doi.org/10.48550/arxiv.1511.08458

ONNX Runtime developers. (2018). ONNX Runtime [Computer software]. https://github.com/microsoft/onnxruntime

Ramadhan, A. J. (2018). Wearable Smart System for Visually Impaired People. Sensors, 18(3), 843. https://doi.org/10.3390/s18030843

Walle, H., De Runz, C., Serres, B., & Venturini, G. (2022). A Survey on Recent Advances in AI and Vision-Based Methods for Helping and Guiding Visually Impaired People. Applied Sciences, 12(5), 2308. https://doi.org/10.3390/app12052308